1. Newcomb’s Problem

1.1 Veritasium

Recently, the YouTube channel Veritasium published a video on Newcomb’s problem. There, they explore the problem, consider arguments for the possible responses, and discuss some related issues. Since this problem (and the surrounding issues in decision theory) is one of my favorite philosophical issues, I thought I would review their video and offer my approach. If you know someone connected with Veritasium, I’d very much appreciate it if you could show them this article! Also, spoiler: I am a very committed one-boxer.

1.2 The Problem

Newcomb’s problem was originally published by Robert Nozick,1 although he attributes the basic thought experiment to William Newcomb. Newcomb reportedly devised it in 1960, while working as a theoretical physicist at the University of California’s Lawrence Livermore Laboratory. The problem gained mainstream popularity when Martin Gardner featured it in his “Mathematical Games” column in Scientific American in 1973. Here’s how Veritasium introduces the problem:

You walk into a room and there’s a supercomputer and two boxes on the table. One box is open and it’s got $1,000 in it. There’s no trick. You know it’s $1,000. The other box is a mystery box you can’t see inside. You also know that this supercomputer is very good at predicting people. It has correctly predicted the choices of thousands of people in the exact problem you’re about to face.

Now, you don’t know what that problem is yet, but you do know that it has been correct almost every time. Now the supercomputer says you can either take both boxes, that is the mystery box and the $1,000, or you can just take the mystery box. So what’s in that mystery box? Well, the supercomputer tells you that before you walked into the room, it made a prediction about your choice.

If the supercomputer predicted you would just take the mystery box and you’d leave the $1,000 on the table. Well, then it put $1 million into the mystery box. But if the supercomputer predicted that you would take both boxes, then it put nothing in the mystery box. The supercomputer made its prediction before you knew about the problem, and it has already set up the boxes.

It’s not trying to trick you. It’s not trying to deprive you of any money. Its only goal is to make the correct prediction. So what do you do? Do you take both boxes? Or do you just take the mystery box? Don’t worry about how the supercomputer is making its prediction. Instead of a computer, you could think of it as a super intelligent alien, a cunning demon, or even a team of the world’s best psychologists. It really doesn’t matter who or what is making the prediction. All you need to know is that they are extremely accurate, and that they made their prediction before you walked into the room. [00:26-2:14]

The relevant facts are that you are now deciding between taking only the mystery box (one-boxing) and taking both boxes (two-boxing), and you get to keep the monetary contents of the box or boxes that you take. The first box is transparent, contains one thousand dollars (hereafter, $K), and this is known to you. The second box, the mystery box, is opaque, and the contents are not known to you. Prior to you facing this problem, a predictor (supercomputer or otherwise) made a prediction about your choice, and it very reliably predicts choices like this, and you don’t take yourself to be an exception. If it predicted that you’d one-box, it put one million dollars (hereafter, $M) in the opaque box. If it predicted that you’d two-box, it put $0 in the opaque box. All of this is known to you when you face the problem, but you do not know what prediction was made, and you do not know the contents of the opaque box. What decision would you make, and which, if either, is the rational choice?

1.3 Overview

I will explain the motivations for one-boxing that Veritasium discusses in §2 and critique them in §4.1 and §4.2. I will explain the motivations for two-boxing that Veritasium discusses in §3 and critique them in §4.3 and §4.4. In §5, I will cover some of the group rationality problems that they explore. In §6, I will analyze some remaining issues. I will conclude in §7.

2. Motivations for One-Boxing

2.1 Expected Utility

The following motivation for one-boxing is presented:

Look, I’m a reasonable guy and I like money, so I’m going to do whatever gets me the most money. So let’s go weigh the outcomes of both of these decisions. First, I’m going to say that the probability that the computer predicted my decision correctly is going to be c. And because of that the probability that it got it wrong is going to be one minus c.

So let’s look at what happens if I tried to two-box. There is a c chance of me getting $1,000 and a one minus c chance of me getting $1,001,000. If I add these two together, I get a weighted sum, which is going to tell me how much I can expect to get if I tried to two-box.

This is also known as Expected Utility or the EU of two-boxing. And I can just simplify this expression a tiny bit. So let’s look at what happens if I try to one-box. Now there’s a c chance of me getting $1 million, and there’s a one minus c chance of me getting nothing. So we can cancel this out.

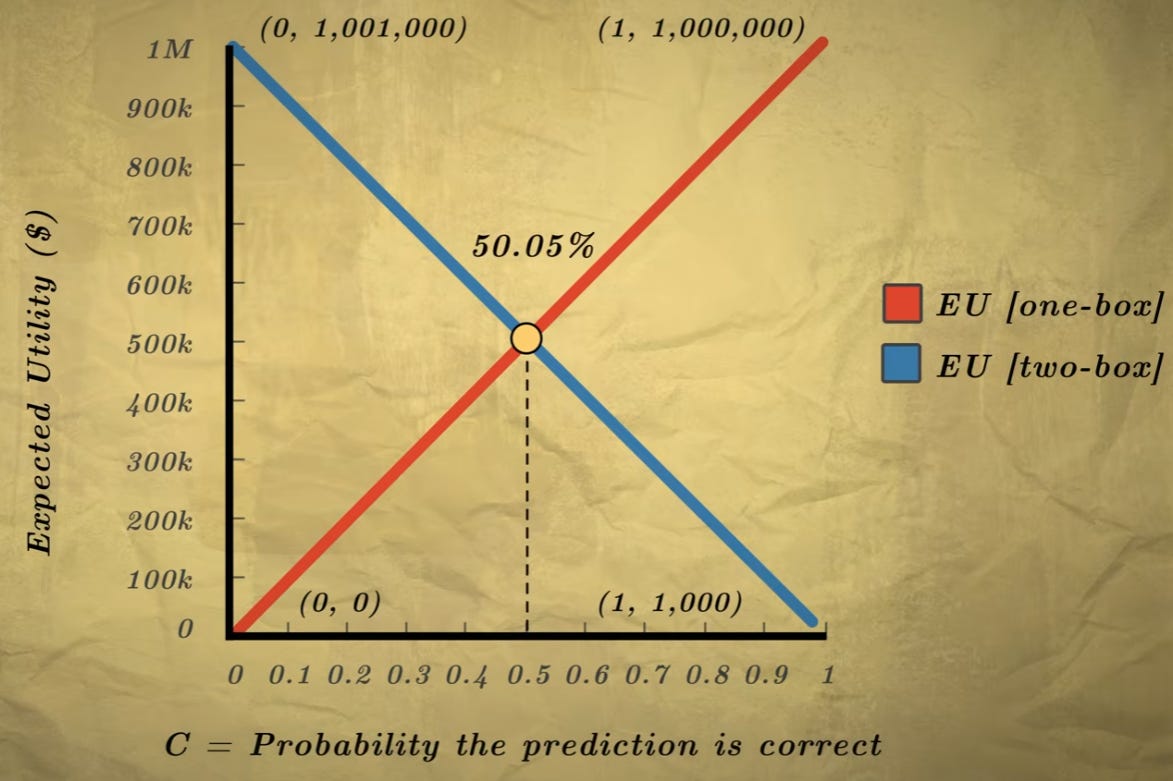

Simplify this to just million dollars times c if I equate these two expressions, I’m going to get the c at which these two expected utilities are equal. And it turns out that the c at which this happens is 0.5005 or 50.05%, which means if the computer is better at predicting than what is basically random, then the expected utility of one-box is going to be higher. [03:32-04:53]

The basic idea is that we can calculate the expected utility of one-boxing and the expected utility of two-boxing as a function of c, and use that to determine which option is preferable if the predictor is reliable (that is, c is close to 1). The expected utilities are:

EU(one-boxing) = c⋅$M + (1-c)⋅$0

EU(two-boxing) = c⋅$K + (1-c)⋅($M + $K)

Filling in the relevant values for $K and $M and solving so that EU(one-boxing) = EU(two-boxing), we get:

1,000,000c = 1,000c + 1,001,000(1-c), so c = 1,001,000/2,000,000 = 0.5005

That is, one-boxing and two-boxing have the same expected utility when c = 0.5005, or 50.05%. Notably, the expected utility of one-boxing is greater for higher c. Since the predictor is presumed to be much more reliable than 50.05%, one-boxing is expected to earn more money, and so is the preferable option. Here, they show the expected utility as a function of c:
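The break-even point can be checked numerically. The following is a minimal sketch, assuming (as the quoted argument does) that the prediction is correct with probability c whichever act is chosen; the function names are hypothetical and the dollar amounts come from the problem statement.

```python
# Illustrative sketch: function names are hypothetical; amounts follow the problem.
M = 1_000_000  # opaque box contents if one-boxing was predicted
K = 1_000      # transparent box contents

def eu_one_box(c: float) -> float:
    """Expected dollars from one-boxing when the prediction is correct with probability c."""
    return c * M + (1 - c) * 0

def eu_two_box(c: float) -> float:
    """Expected dollars from two-boxing when the prediction is correct with probability c."""
    return c * K + (1 - c) * (M + K)

# Setting c*M = c*K + (1 - c)*(M + K) and solving for c gives (M + K) / (2M).
break_even = (M + K) / (2 * M)

for c in (0.5, break_even, 0.9, 0.99):
    print(f"c={c:.4f}  one-box={eu_one_box(c):>12,.2f}  two-box={eu_two_box(c):>12,.2f}")
```

Below the break-even value the two-boxing figure is larger, and above it the one-boxing figure is larger, which is the pattern the argument relies on.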

While I agree with the ultimate conclusion, there are some issues with this argument, which I will discuss in §4.1.

2.2 Why Ain’cha Rich?

They also consider the ‘why ain’cha rich?’ argument, as discussed by David Lewis:2

This is known as the why ain’cha rich argument, which boils down to one super annoying question. If you so smart, then why aren’t you rich? You know, if winning is getting more money, then of course the one-boxers are going to end up better off than the two-boxers. [13:52-14:07]

The point here, although not explored much by Veritasium, is that since one-boxers fare much better on average than two-boxers, it’s more rational to one-box so that you’ll be among those who typically fare much better.

3. Motivations for Two-Boxing

3.1 Causal Dominance

Veritasium presents an argument for two-boxing as follows:

You know that the supercomputer has already set up the boxes. So whatever I decide to do now, it doesn’t change whether there are 0 or $1 million in that mystery box.

And that gives us four possible options that I’ve written down here. If there is $0 in the mystery box, then I could one-box and get $0, or I could two-box and get $1,000. But there could also be $1 million in a mystery box. And in that case, I would get $1 million if I one-box, or I would get $1,001,000 if I two-box.

So I’m always better off by picking both boxes. This is known as strategic dominance, where I always pick the dominant strategy, which in this case is to two-box. So give me those boxes. [05:29-6:12]

Either there is $M in the opaque box, or there is $0 in the opaque box, and whatever it is, your action cannot change it. If there is $M, then you get $1000 more by two-boxing than by one-boxing. If there is $0, then you get $1000 more by two-boxing than by one-boxing. Either way, you get $1000 more by two-boxing than by one-boxing, and so you should take both boxes.
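The four outcomes can be laid out as a small payoff table and the state-by-state comparison checked directly. This is only a sketch of the dominance reasoning as quoted; the dictionary layout and the state labels are hypothetical choices.

```python
# Payoffs in dollars, indexed by (contents of opaque box, act).
# The layout and labels are hypothetical; the amounts follow the problem.
M, K = 1_000_000, 1_000

payoff = {
    ("empty", "one-box"): 0,
    ("empty", "two-box"): K,
    ("full", "one-box"): M,
    ("full", "two-box"): M + K,
}

# Two-boxing dominates if it pays more in every state of the opaque box.
gains = {
    state: payoff[(state, "two-box")] - payoff[(state, "one-box")]
    for state in ("empty", "full")
}
two_boxing_dominates = all(gain > 0 for gain in gains.values())

print(gains)                 # per-state advantage of two-boxing
print(two_boxing_dominates)
```

Note that the check treats the contents of the opaque box as fixed independently of the act, which is exactly the premise the argument leans on.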

3.2 Expected Utility

They go on to make another argument:

I make my decision based off something else, something a little more rational, because I don’t believe that whatever I do now can influence and change the past. I only take into account things that I can actually influence. And clearly whatever I do now, whatever I think now, is not going to change whether that million dollars is going to be in a mystery box or not, because it was already set up before I learned about the problem.

This is known as causal decision theory, where you only take into account things that you can actually cause. And so with this, your expected utility calculation changes. And that’s because you need to use a different probability, one where you could actually cause that $1 million to be in the mystery box or not.

So right before the supercomputer made its prediction, there was some probability that it thought I was going to one-box. So let’s say that probability is p. Then that’s the probability that I’m going to use in my expected utility calculation. And the expected utility to one-box is just going to be zero plus $1 million times p.

That’s pretty good. But the expected utility for two-boxing is going to be $1,000 plus $1 million times p. But that’s just the same as the expected utility for one-boxing plus an extra thousand dollars. So no matter what the computer predicted, my expected utility is always higher by picking both boxes. So of course you’re going to two-box. [08:42-10:10]

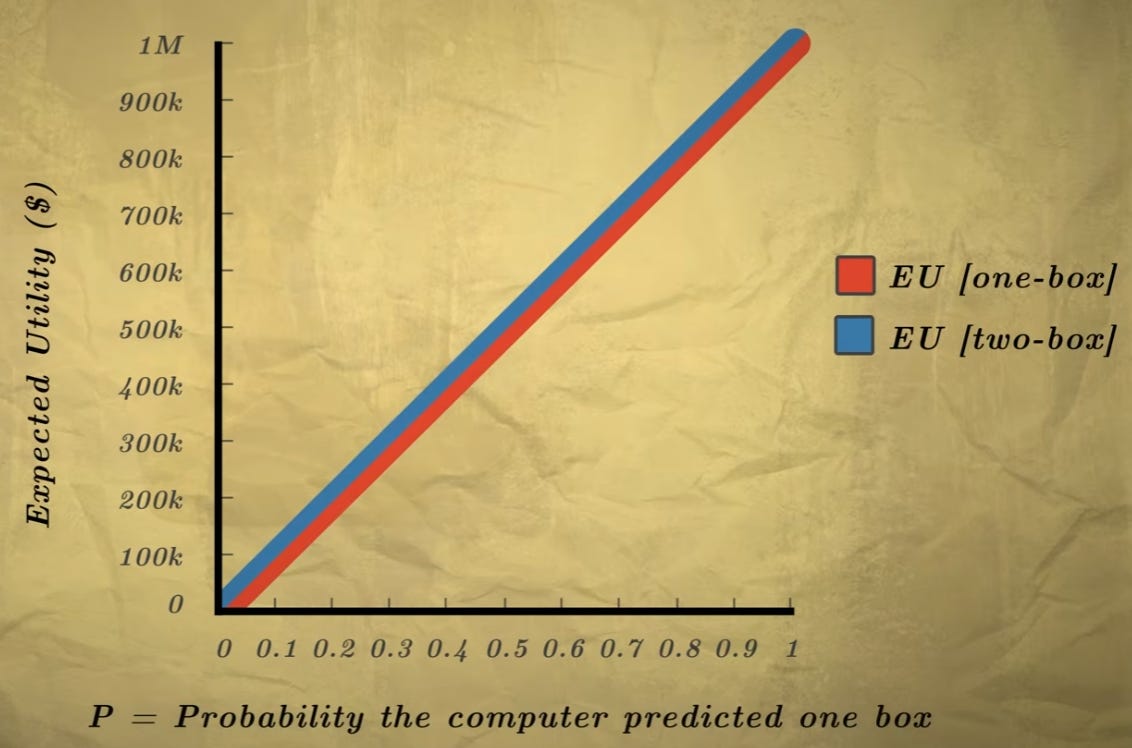

Here, the idea is that since the predictor thought that you would one-box with some probability p, and you can’t now affect that probability, we can perform the expected utility calculations with this probability. Notably, this probability is not a function of anything you think or do now, including the choice you ultimately make. We then have:

EU(one-boxing) = p⋅$M

EU(two-boxing) = p⋅($M + $K) + (1-p)⋅$K = p⋅$M + $K

Since 1000000p + 1000 is strictly greater than 1000000p for any probability p, the expected utility of two-boxing is strictly greater than the expected utility of one-boxing, and so we should two-box. Here, they show the expected utility as a function of p:

Indeed, these expected utilities reflect the fact that, however the boxes were filled, you’ll get a thousand dollars more by two-boxing than by one-boxing.
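A short sketch of this calculation, assuming as the quoted argument does that the same p applies whichever act is chosen. The function names are hypothetical.

```python
M, K = 1_000_000, 1_000

def causal_eu_one_box(p: float) -> float:
    """Expected dollars from one-boxing if p is held fixed regardless of the act."""
    return p * M

def causal_eu_two_box(p: float) -> float:
    """Expected dollars from two-boxing if p is held fixed regardless of the act."""
    return p * (M + K) + (1 - p) * K  # simplifies to p*M + K

for p in (0.0, 0.25, 0.5, 1.0):
    diff = causal_eu_two_box(p) - causal_eu_one_box(p)
    print(f"p={p:.2f}  one-box={causal_eu_one_box(p):>12,.2f}  "
          f"two-box={causal_eu_two_box(p):>12,.2f}  difference={diff:,.2f}")
```

On these assumptions the gap is a constant $K for every p, so the comparison never depends on how reliable the predictor is.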

4. Criticism and Analysis

4.1 Expected Utility and One-Boxing

There are a couple mistakes in the argument here. First, the assumptions that Veritasium makes about c are not a consequence of the problem as given. I will let:

H1 = you are predicted to one-box

H2 = you are predicted to two-box

A1 = you one-box

A2 = you two-box

To say that the predictor is reliable is to say that the following probability is high:

P((H1 and A1) or (H2 and A2))

We could also express this probability as follows:

P(A1|H1)P(H1) + P(A2|H2)P(H2)

We may suppose that P(A1|H1) and P(A2|H2) are both high, although that doesn’t strictly follow. Regardless, this is also the probability that “the computer predicted my decision correctly”, which is what Veritasium labels ‘c’. However, they then say:

So let’s look at what happens if I tried to two-box. There is a c chance of me getting $1,000 and a one minus c chance of me getting $1,001,000. If I add these two together, I get a weighted sum, which is going to tell me how much I can expect to get if I tried to two-box. [03:54-04:10]

This is wrong. The expected value of two-boxing, in the relevant sense, is:

EU(A2) = P(H1|A2)⋅($M + $K) + P(H2|A2)⋅$K

The calculation performed by Veritasium assumes that P(H2|A2) = c and P(H1|A2) = 1-c, but this does not follow. Recall, c = P(A1|H1)P(H1) + P(A2|H2)P(H2). Even if we suppose that P(A1|H1) = P(A2|H2) = c, note that those are not the same conditional probabilities required for the expected utility calculation above. Indeed, it could be that P(A1|H1) and P(A2|H2) are both high, but P(H1|A1), P(H2|A1), P(H1|A2), and P(H2|A2) are such that the relevant sort of expected utility calculations favor two-boxing.3
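To make the gap between the two kinds of conditional probability concrete, here is a sketch with assumed, purely illustrative numbers: the predictor almost always predicts one-boxing, and each kind of prediction comes true 90% of the time. The specific values and helper names are hypothetical and are not taken from the video or from the problem statement.

```python
M, K = 1_000_000, 1_000

# Assumed, purely illustrative inputs.
p_h1 = 0.99999          # P(H1): predicted to one-box
p_a1_given_h1 = 0.9     # P(A1|H1)
p_a2_given_h2 = 0.9     # P(A2|H2)
p_h2 = 1 - p_h1

# Joint probabilities P(act, prediction).
joint = {
    ("A1", "H1"): p_a1_given_h1 * p_h1,
    ("A2", "H1"): (1 - p_a1_given_h1) * p_h1,
    ("A1", "H2"): (1 - p_a2_given_h2) * p_h2,
    ("A2", "H2"): p_a2_given_h2 * p_h2,
}

def p_h1_given(act: str) -> float:
    """P(H1 | act), obtained from the joint distribution by Bayes' rule."""
    return joint[(act, "H1")] / (joint[(act, "H1")] + joint[(act, "H2")])

c = joint[("A1", "H1")] + joint[("A2", "H2")]  # overall accuracy
eu_one_box = p_h1_given("A1") * M
eu_two_box = p_h1_given("A2") * (M + K) + (1 - p_h1_given("A2")) * K

print(f"c = {c:.3f}")
print(f"P(H1|A1) = {p_h1_given('A1'):.6f}, P(H1|A2) = {p_h1_given('A2'):.6f}")
print(f"EU(one-box) = {eu_one_box:,.2f}, EU(two-boxing) = {eu_two_box:,.2f}")
```

With these inputs the overall accuracy is 0.9, yet P(H1|A2) is still very close to 1, so conditioning on the act favors two-boxing. High P(A1|H1) and P(A2|H2) do not by themselves fix the probabilities that the expected utility calculation needs.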

Nevertheless, most discussions about Newcomb’s problem assume that P(H1|A1) and P(H2|A2) are both high. However, note that if some assumption like this is not specified (or otherwise warranted), then the decision rules might not disagree, since the details could be filled in so that Evidential Decision Theory (hereafter, EDT) favors two-boxing.45 For sake of analysis, hereafter, I will assume that P(H1|A1) and P(H2|A2) are both equal to c as given.

There is a second problem with the given utility calculation, although a less serious one. In decision theory, standardly, expected utility is not a function of monetary value per se, but of our preferences over outcomes. Strictly speaking, the expected utility calculation above requires an unwarranted assumption, namely that preference for monetary gain varies linearly with monetary value. Not only is this an unstated assumption, it’s a psychologically unrealistic one.6 For most people, there is diminishing marginal utility of monetary value.

Nevertheless, most discussions of Newcomb’s problem model monetary preference as linear. This is a fair assumption, since we could just stipulate that there is a person facing the problem with linear preference for monetary value and consider what is the rational choice for them. Additionally, even if we don’t assume that monetary preference is linear, the true preference function is unlikely to be so extreme that the decision rules would agree. In any case, the monetary values and probabilities could be made more extreme to ensure that the decision rules disagree.
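As a rough check on the claim that a realistic preference function would not bring the decision rules into agreement, here is a sketch using a logarithmic utility function. The choice of log utility and the reliability value c = 0.99 are assumptions made for illustration only.

```python
import math

M, K = 1_000_000, 1_000
c = 0.99  # assumed predictor reliability, i.e. P(H1|A1) = P(H2|A2)

def u(dollars: float) -> float:
    """Hypothetical concave utility: each extra dollar matters less."""
    return math.log1p(dollars)

# Evidential expected utilities, conditioning on the act.
edt_one_box = c * u(M) + (1 - c) * u(0)
edt_two_box = c * u(K) + (1 - c) * u(M + K)

print(f"EDT one-box: {edt_one_box:.3f}, EDT two-box: {edt_two_box:.3f}")

# Dominance reasoning is untouched by the change: u is increasing, so
# two-boxing still yields more utility whatever the opaque box contains.
print(u(K) > u(0), u(M + K) > u(M))
```

Under these assumptions the evidential calculation still favors one-boxing while the dominance comparison still favors two-boxing, so the disagreement survives diminishing marginal utility.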

With all of this in mind, assuming that P(H1|A1) and P(H2|A2) are both high and that monetary preference is linear, what are we to make of the argument for one-boxing? In short, it’s correct. I expect to have more money if I one-box than if I two-box, and that is a strict probabilistic consequence of the problem as stipulated. Since I care about having more money, I’m going to act in a way given which I’m likely to have more money. The calculations in §2.1 are mathematically correct, and they do represent the amount of money you expect to have if you one-box vs. the amount of money you expect to have if you two-box. Since I care about having more money, I’m going to perform the action conditional on which I expect to have more money. And that is, quite clearly, one-boxing.7

4.2 Why Ain’cha Rich?

Taking for granted the probabilistic assumption discussed in §4.1, it is a consequence of the problem that one-boxers will get close to $M on average, and two-boxers will get close to $K on average.8 However, while mathematically correct, this is really just another expression of the fact that the expected monetary return of one-boxing is greater than the expected monetary return of two-boxing, and so is essentially another way to frame the argument discussed in §2.1 and §4.1.
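The averages can be illustrated with a small simulation. This is a sketch under the assumption P(H1|A1) = P(H2|A2) = c with c = 0.99; the value of c, the sample size, and the function name are all illustrative choices.

```python
import random

M, K = 1_000_000, 1_000
c = 0.99          # assumed reliability: P(H1|A1) = P(H2|A2) = c
n = 100_000       # simulated players per group
rng = random.Random(0)  # fixed seed so the run is repeatable

def winnings(one_boxes: bool) -> int:
    """Payout for one player whose act is predicted correctly with probability c."""
    correct = rng.random() < c
    predicted_one_box = one_boxes if correct else not one_boxes
    opaque = M if predicted_one_box else 0
    return opaque if one_boxes else opaque + K

avg_one_boxer = sum(winnings(True) for _ in range(n)) / n
avg_two_boxer = sum(winnings(False) for _ in range(n)) / n

print(f"average one-boxer: ${avg_one_boxer:,.0f}")
print(f"average two-boxer: ${avg_two_boxer:,.0f}")
```

The sample averages should land near c⋅$M for one-boxers and near $K + (1-c)⋅$M for two-boxers, which are the same quantities as the expected monetary returns.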

Informed two-boxers will recognize this fact, but maintain that two-boxing is rational. Veritasium notes,

But maybe it’s not about who wins, but about what’s rational. In their 1978 paper, philosophers Gibbard and Harper argue that the rational choice is to pick both boxes, although they do admit that two-boxers will fare worse. They instead say that the game is rigged, and if someone is very good at predicting behavior and rewards predicted irrationality richly, then irrational party will be richly rewarded. But I think that’s a bit of a cop-out, because really, Newcomb’s paradox reveals something surprising that sometimes, in order to be a rational person, you must act irrationally. [14:08-14:45]

It is obviously true that irrational behavior can be rewarded. For example, I could make a bad bet (an irrational act) but get lucky and win. Similarly, I could make a good bet (a rational act) but get unlucky and lose. However, if one-boxing in Newcomb’s problem is irrational, then the rewarded irrationality in Newcomb’s problem is of a special sort, since it was expected that you would get rewarded. The subject facing the Newcomb scenario can rationally expect that they’ll be rewarded for one-boxing. Thus, on the two-boxer’s understanding of ‘rationality’, it must be possible for an action to be uniquely rational even though you expect to be worse off if you perform that action compared to some alternative.

On how I understand ‘rational action’, that is straightforwardly incoherent. It is simply not possible for the rational action to be to take the worse bet, but that is exactly what two-boxers require.9 David Lewis (a two-boxer) recognized this approach:

For it is impossible, on their conception of rationality, to be sure at the time of choice that the irrational choice will, and the rational choice will not, be richly pre-rewarded. V-irrationality cannot be richly pre-rewarded, unless by surprise. (And we did not plead surprise. We knew what to expect.) The expectation that only one choice will be richly pre-rewarded—richly enough to outweigh other considerations—is enough to make that choice V-rational.10

Here, “V-rationality” and such is the notion of rationality according to EDT. I agree with this notion of rationality. Or, more accurately, it is this notion of rationality that I care about when deciding how to act. Someone may consider other features relevant, so that what they count as ‘rational’ is sometimes about certain causal chains, non-backtracking counterfactuals, or something else that allows the ‘rational action’ to be one given which they expect a worse outcome. That’s fine with me: they can use the phrase ‘rational action’ to capture what they have in mind, and act ‘rationally’ in their intended sense. I’ll return to this in §4.4 and §7.

Veritasium also said, as I quoted, that “Newcomb’s paradox reveals something surprising that sometimes, in order to be a rational person, you must act irrationally.” Depending on exactly how “acting irrationally” is understood, I might agree with this, but I do not think that Newcomb’s problem reveals this. I will revisit this in §5.2.

4.3 Causal Dominance

In the video, Veritasium calls their dominance argument “strategic dominance”. However, I prefer to call this sort of argument a causal dominance argument. After all, there are different sorts of dominance arguments, some of which I would accept.

The problem with the causal dominance argument, in short, is that there is what’s called ‘act-state dependence’. To help illustrate the problem, consider an uncontroversially illicit application of dominance-style reasoning. Suppose that you’re considering whether to get a particular vaccine. The vaccine is quite effective at preventing some disease, and most people who don’t get the vaccine contract the disease. While getting the vaccine incurs some small cost to you, getting the disease incurs a significant cost. However, you might reason, you should not get the vaccine, since not getting the vaccine dominates getting the vaccine. After all, if you don’t get the disease, then you’re better off not having incurred the cost of getting the vaccine, and if you do get the disease, then you’re still better off not having incurred the cost of getting the vaccine. Either way, you’re better off not getting the vaccine, and so you should not get the vaccine.

This conclusion is wrong, of course; you should get the vaccine. You may be quick to point out that in the vaccine scenario, whether you get the vaccine is causally relevant to whether you get the disease, and dominance arguments apply in general only when the relevant state is not causally dependent on how you act.

I think this is close, but doesn’t capture exactly what’s wrong with the given simple dominance argument. Rather, the problem is that whether you get the vaccine is not probabilistically independent from whether you get the disease. In other words, it’s more likely that you get the disease if you don’t get the vaccine than if you do. The dominance argument against getting the vaccine is illegitimate because there is probabilistic dependence of the relevant states on the act that you perform.

Consider a similar case. In this version, we might suppose that the vaccine is very unusual in that while it’s in a sense very “effective”, it is causally irrelevant to whether you get the disease. Nevertheless, the vast majority of people who get the vaccine avoid the disease, and the vast majority who do not get the vaccine contract the disease. Although unusual, the scenario is at least coherent. We might suppose that all of our expectations regarding what will happen in this modified vaccine scenario are exactly the same as our expectations regarding what will happen in the original vaccine scenario except that, of course, the connection between our getting the vaccine and our getting the disease does not count as causal. The point is, we have just as much confidence we’ll avoid the disease if we get the vaccine (and so on) in both versions.

To me, it is quite plain that the decision-theoretically relevant facts are exactly the same in both vaccine scenarios. In both cases, you should get the vaccine precisely because it’s much more likely that you’ll get the disease if you do not, and you care most about not getting the disease. Accordingly, in both cases, the simple dominance argument is fallacious. Note that the reason it is fallacious has nothing to do with causation (since that is absent in the second vaccine case); rather, it is that both cases involve act-state dependence.

This is why causal dominance arguments can go wrong, and do go wrong when applied to Newcomb’s problem. Valid applications of dominance require act-state independence, but causal dominance arguments do not require that. In Newcomb’s problem, there is act-state dependence, and so the given causal dominance argument is illegitimate.11
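The vaccine case can be put in numbers to show how a state-by-state comparison and a conditional expectation come apart. All figures below (costs and probabilities) are assumed for illustration, and the function names are hypothetical.

```python
# All numbers are assumed for illustration; costs are in arbitrary units.
VACCINE_COST = 1
DISEASE_COST = 100
p_disease = {"vaccine": 0.05, "no vaccine": 0.80}  # P(disease | act)

def cost(act: str, disease: bool) -> int:
    """Total cost of an act in a given state."""
    return (VACCINE_COST if act == "vaccine" else 0) + (DISEASE_COST if disease else 0)

# State-by-state comparison: skipping the vaccine is cheaper in each state.
skipping_dominates = all(
    cost("no vaccine", disease) < cost("vaccine", disease) for disease in (True, False)
)

def expected_cost(act: str) -> float:
    """Expected cost using the probability of disease conditional on the act."""
    p = p_disease[act]
    return p * cost(act, True) + (1 - p) * cost(act, False)

print(skipping_dominates)
print(expected_cost("vaccine"), expected_cost("no vaccine"))
```

Skipping the vaccine wins in each state taken separately, yet its expected cost is far higher once the probability of disease is allowed to depend on the act. Nothing in the calculation says whether that dependence is causal, so it applies equally to both vaccine scenarios.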

4.4 Expected Utility and Two-Boxing

Veritasium spends some time noting that our thinking or action now does not causally influence the predictor, and it does not alter the contents of the opaque box. This is correct, of course, but this shouldn’t motivate calculating expected utilities in some other way.

Let’s suppose that you initially think that there’s a probability p that you were predicted to one-box. It would be false to say that the expected monetary return of one-boxing is p⋅$M and the expected monetary return of two-boxing is p($M + $K) + (1-p)($K). Those expressions would be correct only if the prediction were probabilistically independent of your act, so that the same p applied whichever act you perform, and that is not the case here. The correct calculations for expected monetary value are the same as the expected utility calculations given in §2.1 and §4.1.

As an illustration, imagine you were facing Newcomb’s problem, and many smart investors were watching you make your choice. They observe that you two-box, but they don’t know what’s in the opaque box. Then, without revealing the contents of the opaque box, you offer them a chance to pay you to get all of the money for themselves. What do you think they would bid, and how much do you think they would bid had you one-boxed instead? Of course, they would bid significantly more if you one-box, since they recognize that it’s much more likely that the opaque box contains $M if you do.12

A proponent of two-boxing might grant this, pointing out that they aren’t intending to calculate expected monetary value, but expected utility in some sense that is distinct from evidential expected utility. Two-boxers are trying to maximize this other sense of expected utility (which we might call ‘causal expected utility’). Note what I said toward the end of §4.2. It might be that they have some other notion of ‘rational action’ that is concerned with maximizing this other notion of expected utility. Someone can use ‘rational action’ in that sense and use it to guide their actions accordingly.

However, I find it utterly mysterious. In Newcomb’s problem, I’m concerned with having more money, and so I want my decision rule to track the evidential expected utilities. In other words, it should be that the rational action is the one conditional on which I expect the most preferred result. The two-boxer’s notion of ‘rational action’, and the corresponding decision rule, does not guarantee this. By their own admission, that is violated in Newcomb’s problem. Accordingly, they are free to act in a way that maximizes some other utility function, whereas I will act in the way that maximizes how much money I rationally expect to have. If that’s what you’re concerned with too, congratulations: you’re a one-boxer!

5. Group Rationality

5.1 The Prisoner’s Dilemma

Veritasium also introduces the prisoner’s dilemma, saying

In the prisoner’s dilemma, you and another player compete for money by either cooperating or defecting. If you both cooperate, then you get three coins each. But if you defect and your opponent cooperates, then you get five coins and they get nothing. And if you both defect, you get one coin each. So no matter what your opponent does, you are always better off by defecting.

But if you play this game, not once but repeatedly, then everything changes. All of a sudden, you’re better off by cooperating. [15:02-15:33]

I agree with this, unless you expect your opponent’s actions to correlate sufficiently with yours. In other words, perhaps it’s more likely that they’ll cooperate if I cooperate, and more likely that they’ll defect if I defect. In that case, the expected monetary returns for cooperating may exceed the expected monetary returns for defecting, and so I should cooperate even in the one-shot prisoner’s dilemma.1314
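How much correlation is “sufficient” can be sketched with the payoffs from the quoted description. The parameter q, the probability that the opponent makes the same move I do, is an assumption introduced here for illustration; the function name is hypothetical.

```python
# Payoffs (my coins) from the quoted description, indexed by (my move, their move).
payoff = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}

def eu(my_move: str, q: float) -> float:
    """Expected coins if the opponent matches my move with probability q (assumed)."""
    other = "D" if my_move == "C" else "C"
    return q * payoff[(my_move, my_move)] + (1 - q) * payoff[(my_move, other)]

# Cooperating and defecting tie where 3q = q + 5(1 - q), i.e. q = 5/7.
break_even = 5 / 7

for q in (0.5, break_even, 0.9):
    print(f"q={q:.3f}  cooperate={eu('C', q):.3f}  defect={eu('D', q):.3f}")
```

With these payoffs, cooperating has the higher expected return once q exceeds 5/7, roughly 0.71. With no correlation beyond chance (q = 0.5), defecting comes out ahead.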

5.2 Mutually-Assured Destruction

Veritasium continues,

But there are some realistic scenarios where staying true to a worse option could have deadly consequences. On the 29th of August 1949, the Soviet Union detonated the RDS-1 bomb as part of their first nuclear weapons test. This sent the US and the USSR into a furious arms race. By the mid 1960s, the US had over 30,000 nuclear warheads and the USSR had just over 6000.

Both sides were more than capable of destroying the other. The US Secretary of Defense at the time, Robert McNamara, didn’t advocate for disarmament. Instead, he recommended a strategy of assured destruction where the US should be able to deter a deliberate nuclear attack by maintaining a highly reliable ability to inflict an unacceptable degree of damage upon any single aggressor.

This strategy eventually became known as mutually assured destruction, or MAD. If either country attacked first, the other would surely retaliate and lead to total annihilation of both sides. So having that commitment to retaliate is beneficial. It stops the attack from happening in the first place. [16:50-17:59]

This is a rather interesting sort of decision problem where what you rationally do at one time might incline you to do something at a later time which, narrowly considered, is irrational. As a host on Veritasium points out, perhaps the best approach is to appear that you would follow through with the counter-attack, but not actually do it if prompted.

In a realistic scenario, this may suffice, but we could modify the details so that it’s actually not the best strategy. Suppose that the opponent is very good at determining whether we would retaliate. And if they determine that we will likely not retaliate, they will likely strike first, and otherwise they will not strike first. In this case, keeping up appearances is not enough unless we are actually inclined to retaliate. Accordingly, the rational approach is to develop our psychology (and/or the relevant infrastructure) so that we actually would be inclined to retaliate. If we succeed in developing our psychology (and/or the relevant infrastructure) in this way, we would retaliate.

Considered on its own, that retaliation is technically irrational, but it’s admissible that we act irrationally on that occasion because our doing so (if that circumstance arises) was a consequence of the best overall strategy for dealing with the threat of nuclear war. Put one way, this is the best strategy because although it does create a chance of maximum destruction (worst outcome), by creating that chance we make it much less likely that there are any nuclear attacks, which is the best outcome. A speaker on Veritasium makes a good point along these lines, saying,

There’s this other game theory interaction, the game they call chicken. You’re both driving your cars at each other. The worst thing is if neither of you swerve because then you both die. But you win if the other person swerves and you don’t. The best strategy in this game is to visibly take the steering wheel out of your car and throw it out the window so that the opponent can see that you’ve done that. [19:10-19:30]

In the nuclear case, the analogous strategy would be to take the control of retaliation out of our hands so that we would retaliate automatically, and convince nuclear powers that we’ve set up our infrastructure in this way. This idea was explored, as Veritasium points out, in the movie “Dr. Strangelove”.15 The point of these cases is that it can be rational to do something that will convince our opponent that they’ll be worse off if they strike (or stay the course, etc.) even if our convincing them of that risks the worst possible outcome.

6. Other Issues

6.1 Free Will

Veritasium says,

For example, the only way you’re going to win this game is to already be the kind of person to one-box, but then two-box at the last second anyways, that’s the only way you’re going to get the million and a thousand dollars. [11:44-11:57]

This is not correct. It’s not merely that the predictor is reliable at tracking some general disposition, or “what kind of person you are”. It’s not even required that the predictor knows any of that. Rather, the predictor is reliable at tracking what act you actually perform, be that one-boxing or two-boxing. Thus, you should expect that if you are the “kind of person to one-box” but two-box at the last second, the predictor likely will have predicted that you would two-box, and you will leave with $K only, not $M + $K.16

They continue,

If the predictor is so good, let’s say it’s 100% accurate, then that’s not even possible. Would you say that’s true? Yeah. Then a follow up question is, if such a perfect predictor would exist, does that mean that free will doesn’t exist because you’re saying there’s nothing you can do in between walking into that room and making your decision that ends up changing what was predetermined?

That’s right. And maybe this reveals where I’m coming from. And I think where I’m coming from is maybe free will doesn’t exist. I come down on this point of like, free will is an illusion, but our world operates in a way that is indistinguishable from free will being real. And therefore you have to act as though it’s real, as though it’s 100% real.

If we think that free will is not real and it’s an illusion, and then you have someone who’s committing crimes, and then you want to say, well, that’s not his fault. Therefore, instead of putting, you know, murderers in jail for 25 years, we’re just going to, give them some gardening classes or something like that, the problem is that then changes the environment where everyone knows you can kill someone and you can go to like, do the gardening.

So you can’t change the system based on the knowledge that it’s an illusion. Whether we do or don’t have free will, you have to live as though it exists. [12:00-13:20]

It would’ve been interesting if they had discussed the perfect predictor case further. How should we analyze the case where the predictor is inerrant (never in fact makes a false prediction) or infallible (could not make a false prediction)? In particular, if we think that one-boxing is rational in the perfect predictor case (for at least one version), but that two-boxing is rational in the imperfect predictor case, that suggests a rather strangely discontinuous strategy. After all, it doesn’t seem that there should be a decision-theoretically relevant difference between the case where the predictor is 99% reliable and the case where the predictor is 100% reliable.17

Of course, I say the same thing in the limit case as in the standard case. If P(H1|A1) = P(H2|A2) = 1, then I should view my choice as between a certain $M and a certain $K, and so should one-box accordingly.
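
To illustrate the continuity point, here is a minimal sketch of the evidential expected dollar values as the predictor's accuracy approaches 1. It assumes the dollar amounts from Veritasium's statement ($M = $1,000,000 and $K = $1,000), a symmetric accuracy c = P(H1|A1) = P(H2|A2), and utility that is linear in money. The function name `evidential_values` is just an illustrative label, not something from the literature.

```python
M = 1_000_000  # mystery box contents if one-boxing was predicted (assumed amount)
K = 1_000      # contents of the open box (assumed amount)

def evidential_values(c, m=M, k=K):
    """Return (one_box, two_box) evidential expected dollar values.

    c is the assumed symmetric accuracy: c = P(H1|A1) = P(H2|A2).
    """
    one_box = c * m            # the mystery box is full with probability c
    two_box = (1 - c) * m + k  # the mystery box is full only if the predictor erred
    return one_box, two_box

for c in (0.9, 0.99, 0.999, 1.0):
    one_box, two_box = evidential_values(c)
    print(f"c={c}: one-box={one_box:,.0f}, two-box={two_box:,.0f}")
```

Under these assumptions, the gap in favor of one-boxing widens smoothly as c rises, and c = 1 is simply the endpoint of that trend rather than a special case.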

However, it might be objected that the limit case undermines free will, and perhaps isn’t even coherent.18 I reject both conclusions. In particular, I reject that freedom (and rational choice) requires that there be what Fischer calls “accessible” worlds where I perform at least two distinct actions. However, since the main subject of this post is not free will, I will not explore this further here.19

6.2 Pre-Commitment

Veritasium ends by considering how we might act as if we had a precommitment, saying,

The question isn’t how to act. The question is what rules are one to follow? Or how does one even decide what rules to follow? Sometimes it’s put in the form of if you knew that you were a robot with programming that you could set, and you could rewire yourself to make yourself obey one set of rules rather than another, the question is, what sort of rules would you wire yourself to obey?

And what you would do is you would make yourself into the kind of creature that sort of always acts in line with the commitments that would have been good to form had you even known about the problem. When you’re in a situation like the Newcomb case, you would end up finding yourself think, if I had been able to make a pre-commitment, what pre-commitment would have been the good one to make? The good pre-commitment to make would have been to be a one-boxer.

And since I’ve already wired myself up to be the kind of person that lives up to all the pre-commitments I would have made, then I’m already, in effect, committed to one-boxing, even though I didn’t realize it. [20:46-21:50]

If I could rewire myself so that I was sure to follow some decision rule, it would be that given by EDT. Presumably, there’s some rule or rules that I think correctly capture which actions are rational, and so if I could decide to make myself follow some rule, it would be that. However, I would think that someone who favors Causal Decision Theory (CDT),20 or some other decision rule, would answer with their preferred decision rule instead. As such, frankly, I’m not sure what the point here is supposed to be.21

7. Conclusion

In this post, I’ve explored Veritasium’s presentation of Newcomb’s problem, and some of the common issues related to that problem. While there were several problems with their video, I still found it pretty good overall. The problem and main arguments they consider were discussed in §1, §2, and §3. In §4, I provided my analysis, revealing my approach. I’m a very committed one-boxer and proponent of EDT.22

In Newcomb’s problem, all I’m concerned with is having money, and I prefer more money to less. It straightforwardly follows from the statement of the problem (given the qualification in §4.1) that I expect to have more money if I one-box than if I two-box. Accordingly, I one-box. As discussed in §4, I just understand the phrase ‘rational action’ in a way such that it’s incoherent to suppose that the rational action is the one given which I expect to be worse off. My notion of rationality is, after all, what Lewis calls V-rationality.23

I do not suppose that I have shown that this is the ‘correct’ sense of the term or that two-boxers are wrong. Two-boxers are, after all, free to tie their notion of rational action to things other than having what they want. On their notion of rationality, there are cases (like Newcomb’s problem) where the “rational action” is one given which they expect to be worse off. I find that quite absurd, but have not here offered much to persuade them apart from explaining my approach.

It may seem that the prospects for breaking the stalemate are bleak, and we’ll be left with groups of people following consistent but divergent decision rules, proclaiming their actions ‘rational’ in their respective senses. Perhaps so, although the relevant philosophical literature continues anyway. There is much discussion of other decision problems, in some of which many people find that certain decision rules give unintuitive recommendations.24 It’s also possible to explore other principles/features sometimes associated with rationality, like ratifiability, dynamic consistency, reflection, and resistance to Dutch books. Additionally, it may be that exploring broader philosophical issues, like modality and counterfactuals, probability, free action, and so on, will move people one way or another.25 Regardless, I am confident in my decision to one-box, and I hope you are too!26


Lewis (1981). See also Gibbard and Harper (1978).

For more on this, see Levi (1975).

For example, suppose that 95% of people who faced Newcomb’s problem one-boxed, and the predictor only ever predicted that people would one-box. In that case, the predictor is reliable in that it is correct 95% of the time. However, given these additional details, the evidential expected value of one-boxing is $M and the evidential expected value of two-boxing is $M + $K.
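
The example in this note can be checked with a short sketch. It assumes $M = $1,000,000 and $K = $1,000, and the dictionary `population` and helper `p_full_given` are hypothetical names introduced only for illustration.

```python
M = 1_000_000  # assumed value of $M
K = 1_000      # assumed value of $K

# Hypothetical joint distribution over (action, prediction):
# 95% of people one-box, and the predictor always predicts one-boxing.
population = {
    ("one-box", "predicted-one-box"): 0.95,
    ("two-box", "predicted-one-box"): 0.05,
}

def p_full_given(action, joint=population):
    """P(mystery box is full | action), i.e. P(one-boxing was predicted | action)."""
    total = sum(p for (a, _), p in joint.items() if a == action)
    full = sum(p for (a, pred), p in joint.items()
               if a == action and pred == "predicted-one-box")
    return full / total

# Share of cases where the prediction matches the action taken.
accuracy = sum(p for (a, pred), p in population.items() if pred == "predicted-" + a)

eev_one_box = p_full_given("one-box") * M
eev_two_box = p_full_given("two-box") * M + K
print(accuracy, eev_one_box, eev_two_box)
```

Here the predictor is right 95% of the time, yet the mystery box is full whatever you do, so two-boxing comes out ahead by exactly $K.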

For an introduction to Evidential Decision Theory (EDT), see Jeffrey (1965), Ahmed (2021).

For example, for most people, the preference for $K over $0 is much stronger than the preference for $M over $M-$K.

Also, as will later be discussed, there are different notions of ‘expected utility’. The sort of expected utility employed here is called ‘evidential expected utility’.

Letting c = 0.95, for example, the average monetary return for one-boxers is $950K, whereas the average monetary return for two-boxers is $51K.
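
Spelled out, assuming $M = $1,000,000 and $K = $1,000, and reading c as the probability that the prediction matches the choice actually made:

```latex
\begin{align*}
\text{one-box:} \quad & c \cdot M = 0.95 \times 1{,}000{,}000 = 950{,}000 \\
\text{two-box:} \quad & (1 - c) \cdot M + K = 0.05 \times 1{,}000{,}000 + 1{,}000 = 51{,}000
\end{align*}
```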

By ‘worse bet’, I mean a bet where you expect to be worse off relative to what you expect to get from another bet. For example, if I expect to gain $10 if I take bet 1 and I expect to gain $20 if I take bet 2, then bet 1 is the worse bet. Two-boxing is a ‘worse bet’ because you expect to get less money if you take that option than if you one-box.

There would be act-state independence if P(H1|A1) = P(H1|A2) = P(H1), but that is violated by construction. For more on this, see Levi (1975). For a broader discussion and defense of EDT, see Ahmed (2014).

For more on this, see Seidenfeld (1984).

Indeed, the one-shot prisoner’s dilemma is quite similar to, if not structurally the same as, Newcomb’s problem. See Lewis (1979) for an argument that they are structurally the same, although see Bermúdez (2013) for an objection.

For an evidentialist approach to the prisoner’s dilemma, see Ahmed (2014), pp.108-119.

In fact, the Soviets eventually built something that sort of functioned like this, called the “Perimeter” system, or “Dead Hand”. However, it’s unclear to what extent that system is automated.

That said, it’s compatible with the standard Newcomb that the predictor is exploitable in that way, and perhaps a small fraction of people act/think in ways given which the predictor is more likely to have made the wrong prediction. However, there being such an exploit and it functioning in the way that Veritasium suggests is not supported by the case as stated, and not in the spirit of the problem.

Or, for arbitrarily small ε, where P(H1|A1) = 1 - ε, and so on.

For papers arguing (in similar fashion) that the limit case is malformed, see Hubin and Ross (1985) and Fischer (2001). For papers arguing that the standard Newcomb case is malformed, see Maitzen and Wilson (2003), Slezak (2005). For a more radical approach, see Priest (2002). For a response to the concerns raised about the standard Newcomb problem, see Burgess (2012).

For a criticism, see Ahmed (2014). For related discussions, see Weintraub (1995), Craig (1987).

For an introduction to Causal Decision Theory (CDT), see Gibbard and Harper (1978), Weirich (2024).

That said, some of the phrasing from Veritasium is reminiscent of Functional Decision Theory (FDT), as developed by Yudkowsky and Soares (2017). However, while perhaps interesting, I do not consider that a serious work or a serious approach to decision theory.

That said, it may be that in some cases, EDT makes unrealistic modeling assumptions that may need to be relaxed. Nevertheless, as an idealized guide, I take the basic idea to be correct.

For an opinionated discussion of many such problems, see Ahmed (2014).

One of my favorite articles to help “intuition-pump” EDT-style reasoning is Leslie (1991).

For a discussion of further issues with CDT, see Horwich (1985).

I was reading the Stanford Encyclopedia's entry on Causal Decision Theory after reading this, since I am trying to understand why anyone would argue for taking both boxes. It just seems silly to conclude that taking two boxes is the dominant strategy, given that it has a 0% chance of yielding $M + $T and a 100% chance of yielding $T. If a strategy has a 0% chance of yielding a higher value than the opposing strategy, then that strategy is not dominant. Saying, "but if a person took two boxes when the computer predicted one box, they would be better off" is irrelevant, because that would entail a contradiction: the problem assumes that this scenario is infeasible. I also don't see how this destroys decision theory, since you only have two choices, get $M or get $T. The other "possibilities" have a 0% chance of occurring. What is the debate really about, then? Is it something to do with how the word "possibility" is being used (e.g., can there be logically possible outcomes with 0% probabilities?)? Does the fact that the computer "merely predicts" instead of causes create skepticism of the one-box option? Of course, this is an unrealistic problem, since maybe perfect prediction is not actually possible without causation, but if the problem is unrealistic, then what is the issue with the rational choice being unrealistic?

You should write something or schedule a debate with Yudkowsky on FDT. I don’t think it holds up well for the reasons Wolfgang points out but I’d be interested in what you have to say.